The DUTS Image Dataset

What's New

- The dataset has been updated. We found some errors in our original dataset files, corrected them, and updated the dataset. Please re-download the dataset. Alternatively, you can correct the errors manually as follows:

- Go to DUTS-TE/DUTS-TE-MASK and delete the files 'mILSVRC2012_test_00036002.jpg' and 'msun_bcogaqperiljqupq.jpg'.

- Go to DUTS-TR/DUTS-TR-Mask and delete the files 'ILSVRC2014_train_00023530.png', 'n01532829_13482.png' and 'n04442312_17818.png'. Then convert 'ILSVRC2014_train_00023530.jpg', 'n01532829_13482.jpg' and 'n04442312_17818.jpg' to png format and remove the original jpg files.
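The manual fix above can also be scripted. The following is a minimal sketch, not an official tool: the function names are hypothetical, and it assumes the Pillow library is installed for the jpg-to-png conversion. Saving the converted png overwrites the faulty png of the same name, which covers the "delete" step for the training masks.

```python
from pathlib import Path

# Stray jpg files to delete from the test mask folder (names taken from the list above).
TEST_MASK_STRAYS = [
    "mILSVRC2012_test_00036002.jpg",
    "msun_bcogaqperiljqupq.jpg",
]

# Training masks whose png must be replaced by a conversion of the jpg.
TRAIN_MASK_STEMS = [
    "ILSVRC2014_train_00023530",
    "n01532829_13482",
    "n04442312_17818",
]


def fix_test_masks(mask_dir):
    """Delete the stray jpg files; return the names that were actually removed."""
    removed = []
    for name in TEST_MASK_STRAYS:
        path = Path(mask_dir) / name
        if path.exists():
            path.unlink()
            removed.append(name)
    return removed


def fix_train_masks(mask_dir):
    """Replace each faulty png with a png converted from the jpg, then drop the jpg."""
    from PIL import Image  # assumption: Pillow is installed (pip install Pillow)

    converted = []
    for stem in TRAIN_MASK_STEMS:
        jpg = Path(mask_dir) / f"{stem}.jpg"
        png = Path(mask_dir) / f"{stem}.png"
        if not jpg.exists():
            continue  # already fixed, or the file is not present
        with Image.open(jpg) as im:
            im.save(png, format="PNG")  # overwrites the old png if it exists
        jpg.unlink()
        converted.append(stem)
    return converted
```

For example, calling `fix_test_masks("DUTS-TE/DUTS-TE-MASK")` and then `fix_train_masks("DUTS-TR/DUTS-TR-Mask")` from the folder that contains both dataset directories applies the two steps. Both functions skip files that are missing, so running them on an already corrected copy changes nothing.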

Introduction

Saliency detection was originally tackled by unsupervised computational models with heuristic priors. Recent years have witnessed significant progress made by learning-based methods, in particular deep neural networks (DNNs). As more sophisticated network architectures have been designed, existing data sets, which were originally built for evaluation rather than training and contain too few samples, have become unsuitable for training complex DNNs. Besides, there is no well-established protocol for choosing training/test sets. The use of different training sets by different methods leads to inconsistent and unfair comparisons.

Motivated by the above observations, we contribute a large-scale data set named DUTS, containing 10,553 training images and 5,019 test images. All training images are collected from the ImageNet DET training/val sets [1], while test images are collected from the ImageNet DET test set and the SUN data set [2]. Both the training and test sets contain very challenging scenarios for saliency detection. Accurate pixel-level ground truths are manually annotated by 50 subjects.

To our knowledge, DUTS is currently the largest saliency detection benchmark with an explicit training/test evaluation protocol. For fair comparison in future research, the training set of DUTS serves as a good candidate for learning DNNs, while the test set and other public data sets can be used for evaluation.

Copyright claim of the ground-truth annotation

All rights reserved by the original authors of DUTS Image Dataset.

Contact information

This website is maintained by Xiang Ruan (ruanxiang at tiwaki dot com). If you find a bug or have a concern about this website, please send me an email.

For information about the dataset, please contact:

| Name | Email | Affiliation |
|---|---|---|
| Huchuan Lu | lhchuan at dlut dot edu dot cn | Dalian University of Technology |
| Xiang Ruan | ruanxiang at dlut dot edu dot cn | Dalian University of Technology |

Download

- Please download the dataset from the links below
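After downloading and extracting, a quick file count can confirm that the copy is complete. The sketch below is illustrative only: the helper names are hypothetical, and it assumes that each mask folder holds exactly one png mask per image, so that the counts should match the 10,553 training and 5,019 test images stated in the Introduction.

```python
from pathlib import Path

# Image counts stated in the dataset description.
EXPECTED = {"train": 10553, "test": 5019}


def count_files(directory, suffix=".png"):
    """Count files directly inside `directory` with the given extension (case-insensitive)."""
    return sum(
        1
        for p in Path(directory).iterdir()
        if p.is_file() and p.suffix.lower() == suffix
    )


def check_split(directory, split, suffix=".png"):
    """Return (found, expected, ok) for one split, where split is 'train' or 'test'."""
    found = count_files(directory, suffix)
    expected = EXPECTED[split]
    return found, expected, found == expected
```

For instance, `check_split("DUTS-TR/DUTS-TR-Mask", "train")` returns the number of png masks found, the expected number, and whether they agree. A mismatch may point to an incomplete download or to a copy that still contains the errors described under What's New.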

Please cite our paper if you use our dataset in your research

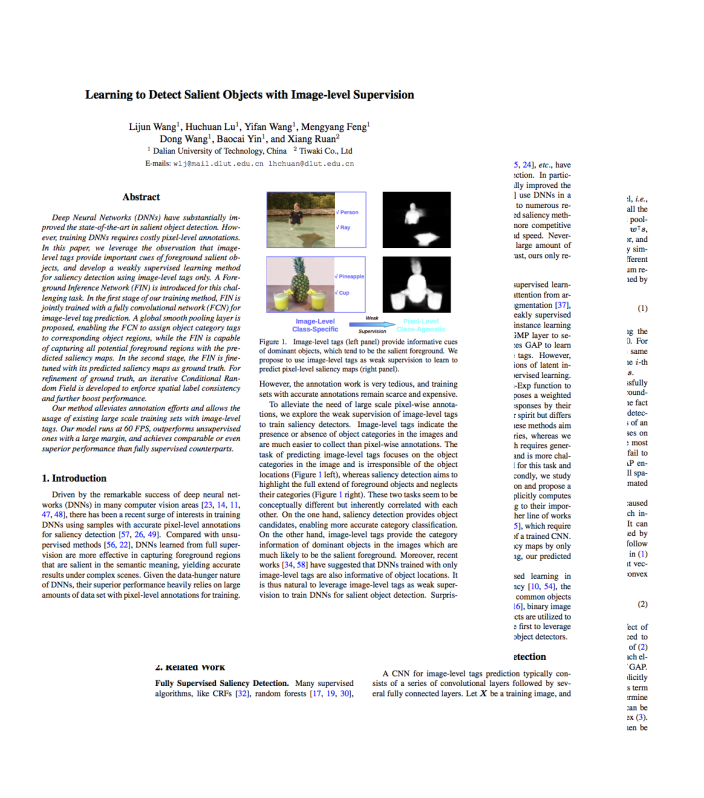

Lijun Wang, Huchuan Lu, Yifan Wang, Mengyang Feng, Dong Wang, Baocai Yin, Xiang Ruan,

"Learning to Detect Salient Objects with Image-level Supervision", CVPR 2017

The source code is available at https://github.com/scott89/WSS

The paper can also be cited with the following BibTeX entry:

@inproceedings{wang2017, title={Learning to Detect Salient Objects with Image-level Supervision}, author={Wang, Lijun and Lu, Huchuan and Wang, Yifan and Feng, Mengyang and Wang, Dong and Yin, Baocai and Ruan, Xiang}, booktitle={CVPR}, year={2017} }

References

- [1] J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li, and L. Fei-Fei. ImageNet: A large-scale hierarchical image database. In CVPR, 2009.

- [2] J. Xiao, J. Hays, K. A. Ehinger, A. Oliva, and A. Torralba. SUN database: Large-scale scene recognition from abbey to zoo. In CVPR, 2010.